Welcome to Test Automation Workshop

It's a great pleasure to share our latest event with you all. I am Moses Kim from the Software Engineer in Test team at LINE. Last October, test automation teams from LINE's global offices gathered at the LINE Tokyo office for the Test Automation Workshop 2018, a two-day workshop that began on October 18.

A common goal for the test automation teams is "Closing the distance between development and testing". Based on this mission, we strive to advance the quality of LINE's products and, by providing test automation for various use cases, enable our developers to focus more on creating value for LINE users. The Test Automation Workshop (TAW, hereinafter) began in June 2017 and has continued ever since, in the locations listed below, so that we test automation engineers can gather and share such use cases and ideas.

- June 2017 - LINE Taiwan office

- January 2018 - LINE Fukuoka office

- October 2018 - LINE Tokyo office

Grow with test automation

With the LINE messenger at the core, each global office runs its business in a different environment, and this variety affects testing. A notable outcome of the workshop was an insight that could enable all of us to advance together, including testing teams, automation teams, and even our development teams. At this workshop, we defined a code of conduct for ourselves, as shown below, with the slogan, "Grow with Test Automation".

- We promote self-growth through test automation.

- We tackle difficulties together by sharing our experiences and knowledge, and by understanding and encouraging each other.

- We widen our view to cover not only technical aspects but process as well.

Here are some numbers regarding the workshop.

- Date: October 18, 2018 (Thursday) – October 19, 2018 (Friday)

- Number of sessions: 10

- Number of attendees: 42 in total

  - From Korea: 10

  - From Taiwan: 10

  - From Fukuoka, Japan: 7

  - From Tokyo, Japan: 7

  - From Thailand: 3

  - From Hanoi, Vietnam: 5

Sessions

Ten sessions were presented at the TAW. Every single one was extremely helpful, and we all learnt a lot.

| Day | Title | Speaker | Speaker from |
|---|---|---|---|
| 1 | Ayaperf: New Performance Testing Framework for Microservices | Ryo Ikeda | Tokyo, Japan |
| 1 | How QA Improves Development Velocity | Krittidech Pomuang, Auttaporn Tantipichainkun | Thailand |
| 1 | Introduction of Test Automation Sessions at STAREAST | Akari Ikenoue | Tokyo, Japan |
| 1 | Test Automation using Cypress | Pei-Yun Lee, Miki Liao | Taiwan |
| 1 | QA in Agile with Android and iOS Native Test Frameworks | Kuan-Wei Lin | Taiwan |
| 1 | Effective Code Review & Metrics | Po-Feng Liu | Taiwan |
| 1 | The Growth of Test Automation in LINE Tech Vietnam | Mai Hanh, Huong Trang | Hanoi, Vietnam |
| 2 | Saving Release Master: Deployment Process Automation | Moses Kim | Korea |
| 2 | Database Testing | Pham Thi Mai, Le Thi Thoa | Hanoi, Vietnam |
| 2 | Test Multiple Devices with JUnit5 | Yuhao Chang | Fukuoka, Japan |

Day 1

Seven sessions were presented on day 1, all in English, and a series of questions followed each session. Since the workshop was reasonably sized, we enjoyed each other's company and got to know what others were doing in each office.

Ayaperf: New performance testing framework for microservices

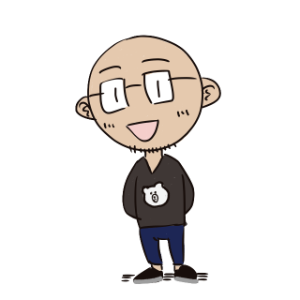

The speaker, Ryotaro Ikeda, is a member of a Software Engineer in Test TF (Task Force) in Japan. Ikeda introduced Ayaperf, LINE's own framework for effective load and performance testing. Ayaperf combines a load testing framework similar to JUnit with a distributed test framework built on Kubernetes. Load testing scenarios for Ayaperf can be written much like JUnit tests, and load tests can be run both locally and remotely.

Test clients containing test scenarios are deployed on k8s (Kubernetes). You can easily create loads on a target service regardless of the number of clients. Test results are aggregated on a Prometheus pod and exposed to Grafana.
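To make the idea of a unit-test-like load scenario more concrete, here is a minimal sketch in Python. It is not Ayaperf's API, which this post does not show; `target_service`, `scenario`, and `run_load` are hypothetical names, and a local thread pool stands in for the distributed clients.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def target_service(payload: str) -> str:
    """Hypothetical stand-in for the service under test."""
    time.sleep(0.001)  # simulate a little work
    return payload.upper()


def scenario() -> float:
    """One scenario: call the service, check the reply, return the latency in seconds."""
    start = time.perf_counter()
    reply = target_service("ping")
    elapsed = time.perf_counter() - start
    assert reply == "PING"
    return elapsed


def run_load(clients: int, calls_per_client: int) -> dict:
    """Run clients * calls_per_client scenario calls on a pool of `clients` threads."""
    total = clients * calls_per_client
    with ThreadPoolExecutor(max_workers=clients) as pool:
        latencies = list(pool.map(lambda _: scenario(), range(total)))
    # Aggregate the raw latencies into a small report.
    return {
        "requests": len(latencies),
        "mean_s": statistics.mean(latencies),
        "max_s": max(latencies),
    }


report = run_load(clients=4, calls_per_client=5)
print(report)
```

In a real setup the aggregation step would be handled by a metrics system rather than an in-process dictionary.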

How QA improves development velocity

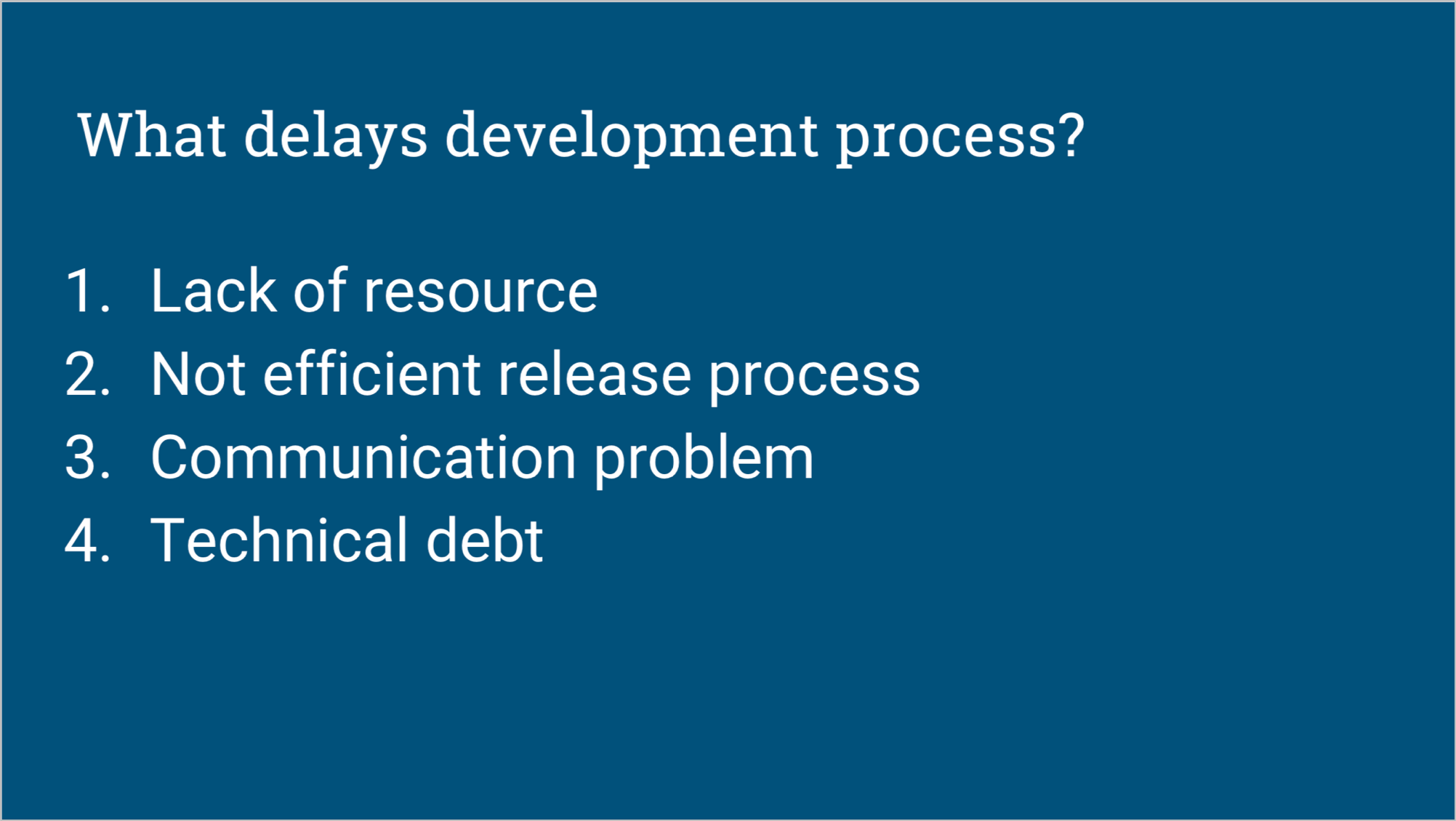

The speakers, Krittidech Pomuang and Autuaporn Tantipichainkun, are from the Quality Management team at LINE Thailand. They introduced the QA process that increased their development velocity by addressing four key factors that delay development: lack of resources, an inefficient release process, communication issues, and technical debt.

They chose faker.js to generate test data efficiently. To improve UI testing, they restructured their code and test scripts. As a result, they reduced testing duration by more than 25%. Also, by starting automated testing earlier in the development phase, they freed up resources, which they in turn spent on more communication with developers.

Introduction of test automation sessions at STAREAST

This session was prepared by Akari Ikenoue, a member of the Service QA team in Japan. She shared the two insights she gained at STAREAST, held in April 2018. At the 2018 conference, about 12.7% of the sessions were about AI, whereas there were none in 2017. AI seems to have finally landed in the world of testing. She realized how much AI will affect the testing industry, especially test automation.

One of the most impressive effects of AI on test automation was the integration of machine learning with test automation frameworks that run tests through UI element detection, such as Selenium and Appium. The integration can resolve the following four major problems found in using such frameworks:

- Having to write the test code yourself

- Having to identify UI elements

- Having to assign test steps

- Low reusability

Test automation using Cypress

The presenters, Pei-Yun Lee and Miki Liao, are members of the QA team at LINE Taiwan. They used Cypress for test automation. With the help of Cypress, they resolved the difficulties they had with their legacy setup (initial configuration, writing scripts, and maintenance) and also built an effective test harness under limited time and resources.

Cypress is an end-to-end JavaScript testing framework for web applications that does not depend on Selenium. It lets you write and run assertions in a simpler manner, using Mocha and Chai. Thanks to Cypress, they became more efficient at controlling network traffic and testing APIs.

QA in Agile with Android and iOS native test frameworks

Kuan-Wei Lin is a member of the LINE Taiwan QA team. He shared how he applied native test frameworks (Espresso for Android, XCTest for iOS) to improve test efficiency and reliability. These native test frameworks have distinctive strengths compared to third-party test frameworks: tests execute faster, and tight integration with the development framework makes them more reliable.

The native test frameworks helped increase test efficiency and reliability, and helped assure service quality through exploratory testing. In addition, they provided code coverage information, which was used to identify more targets that require regression tests.

Effective code review & metrics

Another QA member from LINE Taiwan, Po-Feng Liu, presented a session on improving code quality by visualizing metrics on a public dashboard and on pull requests. You've probably heard this quote before: "If you cannot measure it, you cannot improve it." When we discuss development outcomes or improving something in the development process, "code quality" is something that pops up frequently. But then we wonder how we would determine that code quality has indeed improved.

Po-Feng shared his practice of using SonarQube, a static analysis platform, to display predetermined metrics on a public dashboard or on pull requests, so that code quality is clearly visible at any point in time. He also provided a commit template to help code reviewers easily understand the code context and review it.

The growth of test automation in LINE Tech Vietnam

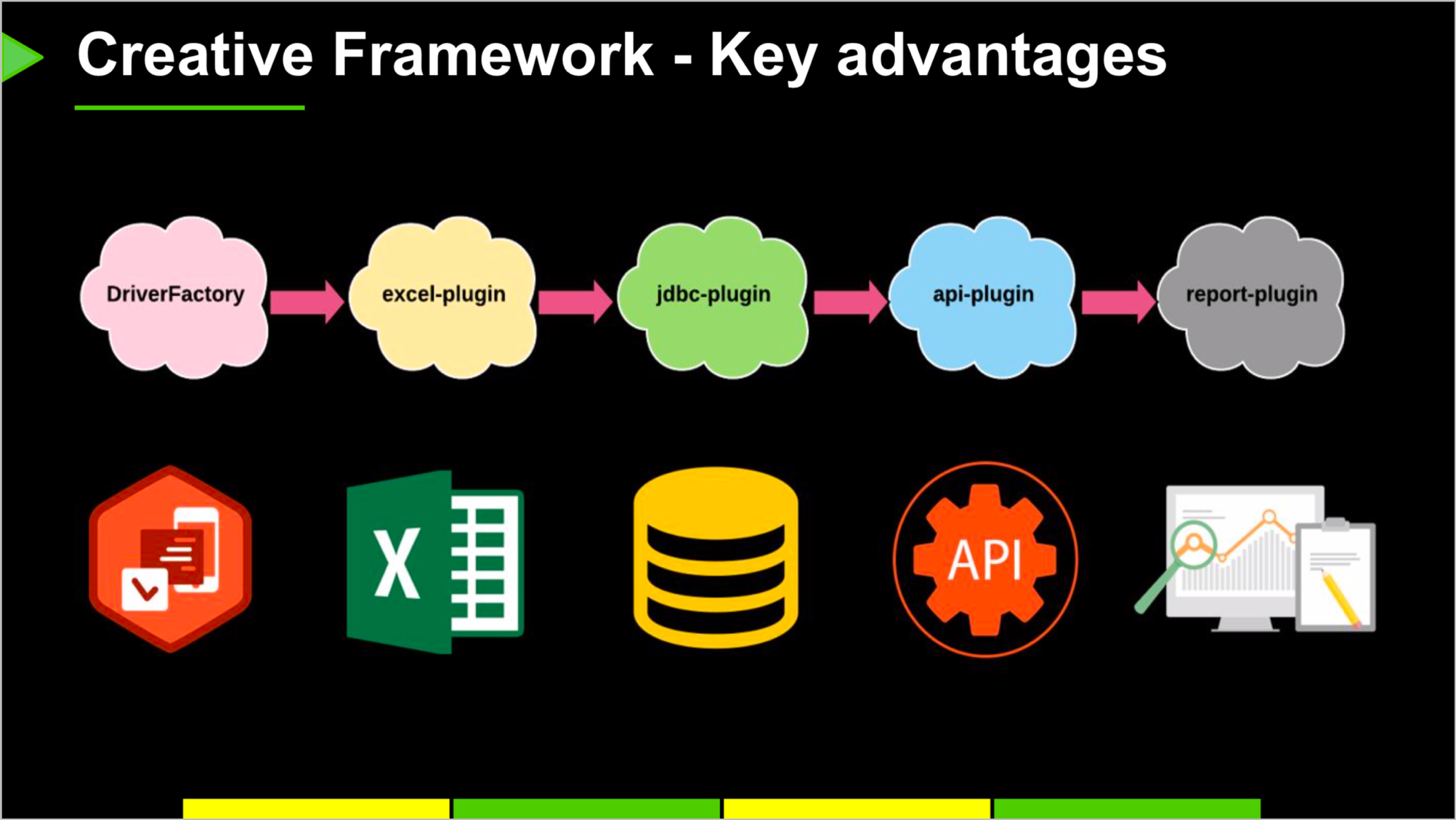

We also had presenters from Vietnam. Mai Hanh and Huong Trang both work in the Software Development team at LINE Tech Vietnam. They shared a creative framework they designed to overcome the drawbacks of the legacy test framework they used in the initial stage of testing.

The following is what they gained through the new framework:

- Separate handling of input data and output data, with the help of a plugin such as Excel

- QA members can focus on writing test cases

- Direct access to Selenium and Appium drivers

- Support for native Android apps, web browsers running on PC, and WebView apps running on Android-based smart devices

Moreover, they no longer have to write test data into scripts themselves, which increases test efficiency. This new framework will be applied to the various bots LINE provides.
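The benefit of keeping test data out of the scripts can be illustrated with a small data-driven sketch. This is not the team's framework: `bot_reply` is a hypothetical bot under test, and a CSV string stands in for the Excel sheet mentioned above.

```python
import csv
import io

# Hypothetical test data kept outside the test logic.
# Each row holds a case name, the input message, and the expected reply.
TEST_DATA = """case,message,expected_reply
greeting,hello,Hi there
shouting,HELLO,Hi there
unknown,asdf,Sorry?
"""


def bot_reply(message: str) -> str:
    """Hypothetical bot under test."""
    if message.strip().lower() == "hello":
        return "Hi there"
    return "Sorry?"


def run_cases(data: str) -> list:
    """Run every row of the data table against the bot and collect (case, passed) pairs."""
    results = []
    for row in csv.DictReader(io.StringIO(data)):
        actual = bot_reply(row["message"])
        results.append((row["case"], actual == row["expected_reply"]))
    return results


results = run_cases(TEST_DATA)
for case, passed in results:
    print(case, "PASS" if passed else "FAIL")
```

Adding a test case then means adding a row to the table, without touching the runner.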

Day 2

On Day 2, we had three sessions as listed below.

- Saving Release Master: Deployment Process Automation - Moses Kim | Korea

- Database Testing - Pham Thi Mai & Le Thi Thoa | Hanoi, Vietnam

- Test Multiple Devices with JUnit 5 - Yuhao Chang | Fukuoka, Japan

Saving release master: Deployment process automation

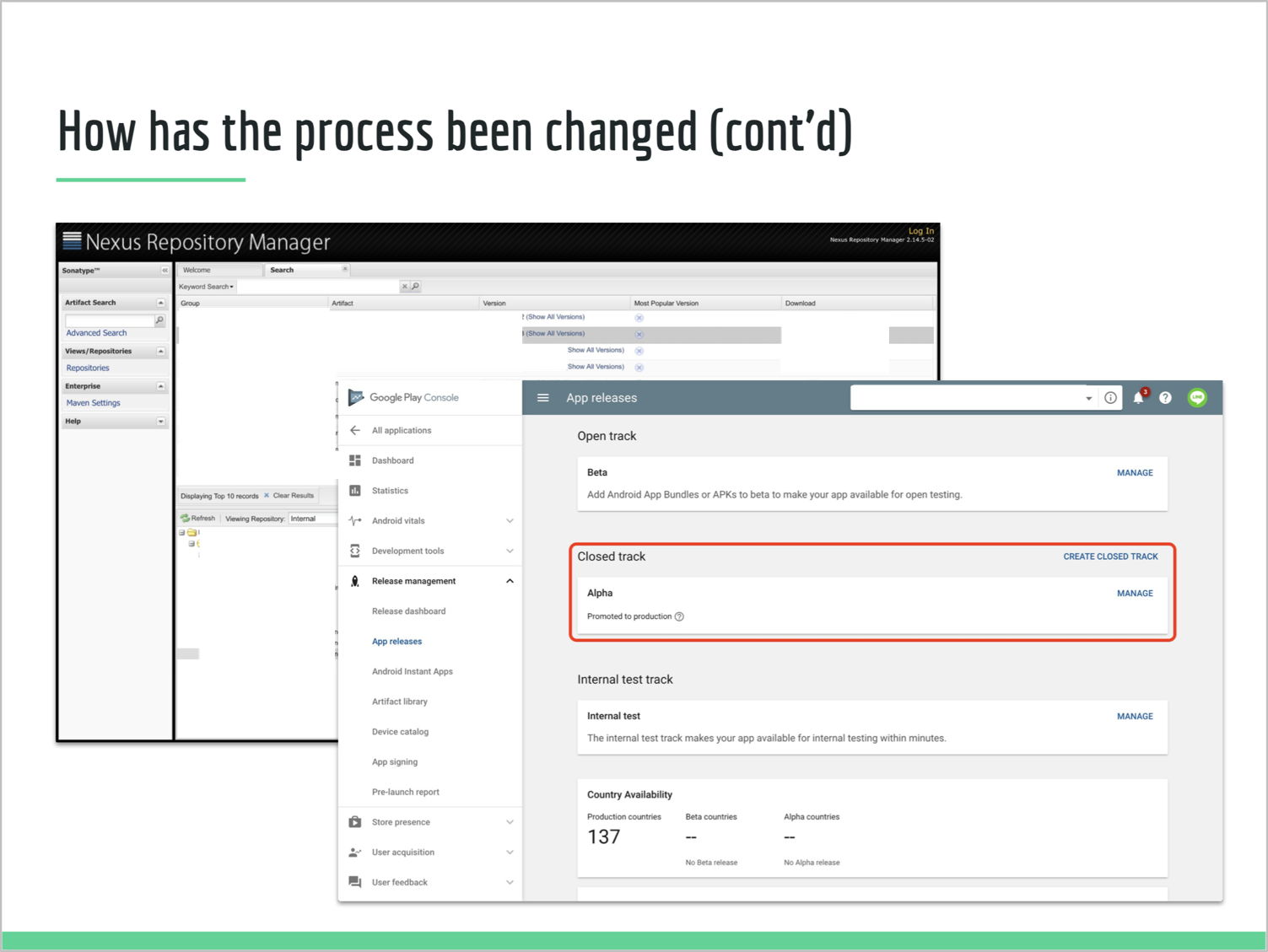

This is the session I presented. As I mentioned earlier, I work on the SET (Software Engineer in Test) team at LINE+ in Korea. I shared how we improved the automatic distribution of Android build outputs.

On top of test automation, we also help improve the development infrastructure. When an APK file is built for a beta or official release, the process now automatically deploys the APK file, together with its symbolication and deobfuscation files, to an error analysis system and the Google Play store. This prevents the symbolication and deobfuscation files from being left out, and also removes dependencies in the app distribution process. We used nelo2 (an error analysis system used at NAVER and LINE), the Google Play Developer Publishing API, and a Maven repository.
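The core idea, never publishing a build whose analysis files are missing, can be sketched as follows. This is an illustration only, not our actual pipeline: the `Build` fields and the two callbacks are hypothetical stand-ins for the uploads to the error analysis system and the store.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Build:
    """Outputs of one release build (all field names are hypothetical)."""
    apk: str
    mapping_file: Optional[str] = None   # deobfuscation mapping
    symbols_file: Optional[str] = None   # symbolication file


def missing_files(build: Build) -> List[str]:
    """List the crash-analysis files that the build did not produce."""
    missing = []
    if not build.mapping_file:
        missing.append("mapping_file")
    if not build.symbols_file:
        missing.append("symbols_file")
    return missing


def release(build: Build,
            upload_to_error_analysis: Callable[[str], None],
            publish_to_store: Callable[[str], None]) -> None:
    """Upload the analysis files first, then publish; abort if any file is missing."""
    missing = missing_files(build)
    if missing:
        raise ValueError("refusing to publish, missing: " + ", ".join(missing))
    upload_to_error_analysis(build.mapping_file)
    upload_to_error_analysis(build.symbols_file)
    publish_to_store(build.apk)


# Usage with stand-in callbacks that only record what would happen.
log: List[str] = []
release(
    Build(apk="app-release.apk", mapping_file="mapping.txt", symbols_file="symbols.zip"),
    upload_to_error_analysis=lambda path: log.append("upload " + path),
    publish_to_store=lambda path: log.append("publish " + path),
)
print(log)
```

Ordering the uploads before the publish step means a crash report from a released build can always be symbolicated and deobfuscated.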

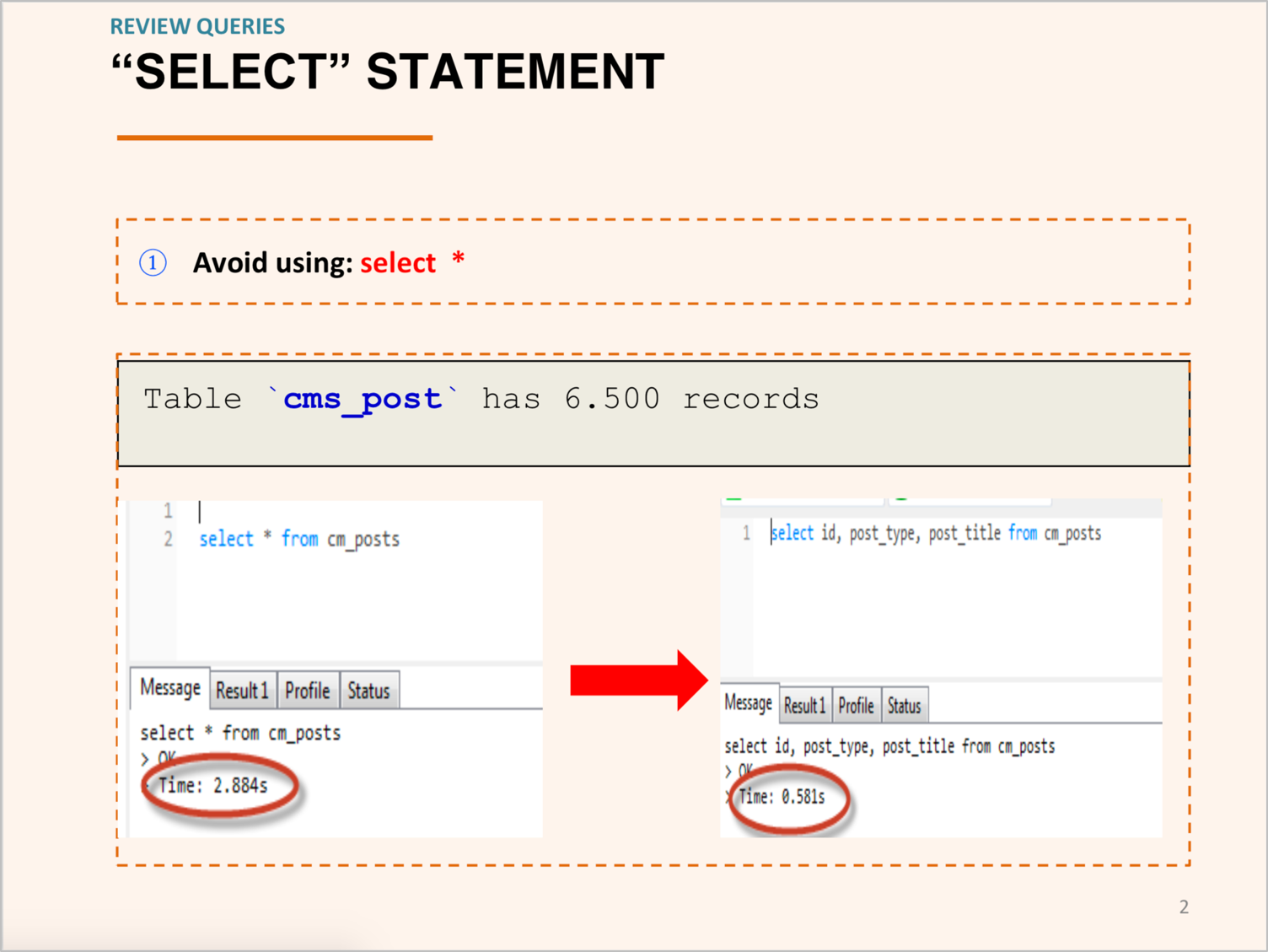

Database testing

This session was presented by Pham Thi Mai and Le Thi Thoa, from LINE Tech Vietnam's Software Development team. The topic was database testing, and their story was interesting in that the testing they focused on was not the kind triggered from outside a database to verify data integrity. Instead, the tests they conducted were reviews of database design and database queries.

Limitations that are often overlooked in the database design phase were addressed through peer reviews by DBAs and QA members, and developers and QA members worked together to improve the SQL queries for CRUD operations.

Their goal was to reduce the number of bugs found near the end of a development process, and the cost of fixing them. To achieve it, they have tried to avoid performance degradation by reviewing the design and queries early in the development phase. Based on the results, they have established a ground rule, which they have been applying in other development processes, too.
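One way to make such a query review repeatable is to inspect the query plan in an automated check. The sketch below is an assumption for illustration, not the presenters' method: it uses SQLite (via Python's standard `sqlite3` module) and a hypothetical `messages` table to check whether a query can use an index.

```python
import sqlite3


def plan_details(conn, query, params=()):
    """Return the human-readable detail column of SQLite's query plan for a query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + query, params).fetchall()
    return [row[3] for row in rows]


def uses_index(conn, query, params=()):
    """True if any step of the plan mentions an index."""
    return any("INDEX" in detail for detail in plan_details(conn, query, params))


# Hypothetical schema and query under review.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE messages (id INTEGER PRIMARY KEY, user_id INTEGER, body TEXT)")
query = "SELECT body FROM messages WHERE user_id = ?"

# Without an index on user_id the plan is a full table scan.
before = uses_index(conn, query, (1,))

# After adding the index, the same query is planned as an index search.
conn.execute("CREATE INDEX idx_messages_user ON messages (user_id)")
after = uses_index(conn, query, (1,))

print(before, after)
```

Other database engines expose their plans differently, so the string check would need to be adapted per engine.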

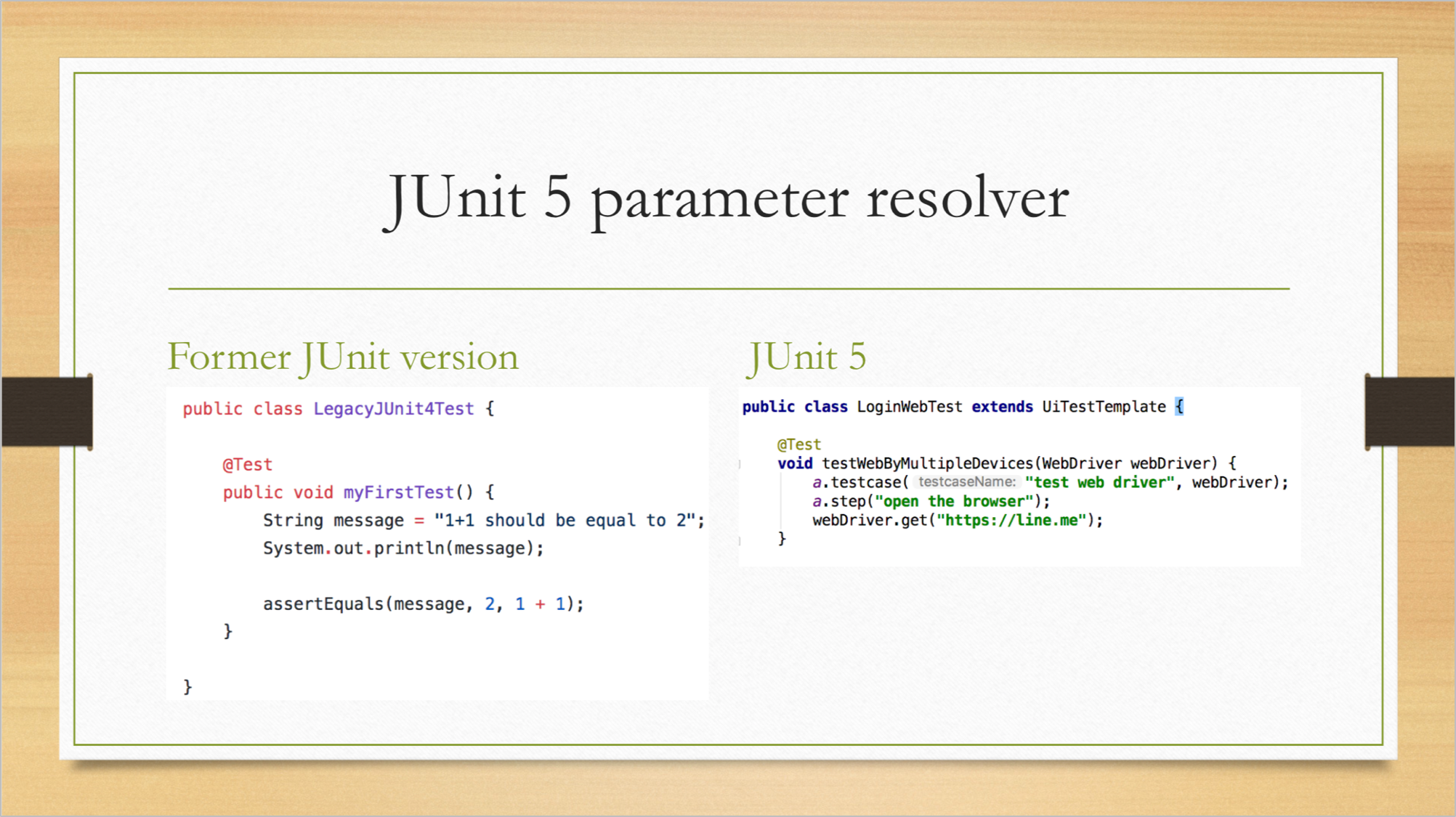

Test multiple devices with JUnit 5

Yuhao Chang is a member of the Test Automation team at LINE Fukuoka. He shared how he improved a test driver manager with JUnit 5. He changed the base of the legacy test driver manager from a combination of JUnit 4, Appium, and Selenium to one based on JUnit 5.

According to him, JUnit 4-based test cases can be reused with the help of JUnit Vintage and the JUnit Jupiter API, alongside JUnit 5-based test cases. He showed how to use new features of JUnit 5 (the extension model, parameter resolution, and custom tags) to test multiple types of devices.

Lastly

Two days felt a bit too short to share and learn from the experiences of each global office, but I have to say, it was a great opportunity to share our interests, issues, and ideas.

There are still parts that remain to be explored in the field of test automation, and it is quite difficult to pick a case and claim it as a perfect success. As mentioned earlier, the services provided in each global office are unique, so we can't simply apply one office's practice to another. Test automation needs to be accepted in development processes and development culture, and only then can we truly make products and services that are testable.

Grow with Test Automation & Closing Distance between Development and Test.

We will surely find the way, as we always have. :)