Hello, I am HT, and I work on Android development for B612. B612, named after the asteroid B-612 from the novella "The Little Prince," is the world's first selfie app to feature pre-filtered selfies and 3- or 6-second collaged video capture. In this post, I would like to walk through how B612 creates an MP4 file from a video collage using MediaCodec.

Determining video size

B612 features video capture, but real-time video encoding is difficult to implement because it requires processing a large amount of data. The image drawn on the screen must either be copied from GPU memory to system memory and then passed to the hardware encoder, or be converted pixel by pixel into video data on the CPU. Because this has to run in real time on large amounts of data, the smaller the data, the better. To keep the data small, the area being read must be minimized so that only the required information is used. To achieve this, the size of the resulting video is determined first, and the size of each individual video is then calculated from it. Each video is encoded at the size obtained this way.

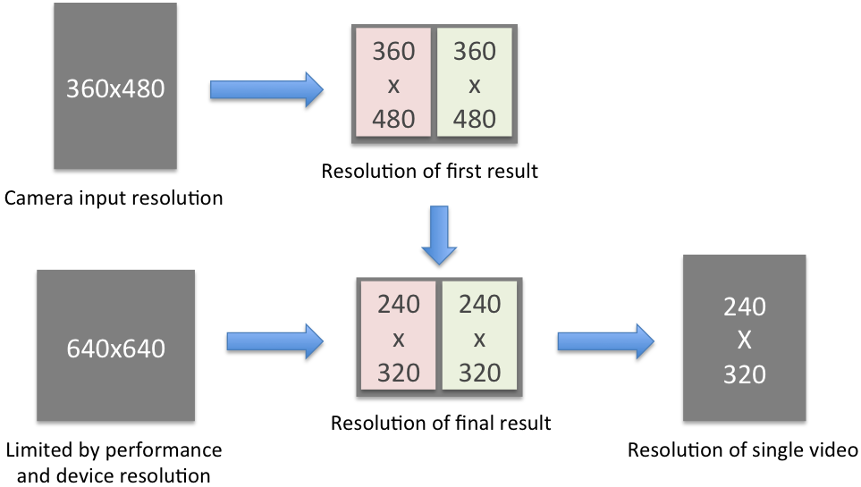

The size of the resulting video is determined by the collage the user chooses. The images from the camera are arranged in the shape of the collage, matching its number of cells horizontally and vertically. This size is the upper bound for the initial resulting video. For example, if the image sent from the camera is 320x480 pixels and the collage is 1x2, the resulting image is 320x960, with one image placed horizontally and two images placed vertically. The resulting video is then scaled down, keeping its aspect ratio, to the largest size that the device can display. Because this processing runs in the graphics library (GL), the video also must not exceed the maximum texture size that GL can handle. Depending on the device, resolutions that are too large may not be processable in real time, so the maximum resolution should be reduced on such devices.
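
The sizing rule above can be sketched roughly as follows. This is only an illustration, not B612's actual code: the class name `CollageResultSize` and the `maxSide` parameter are hypothetical, and `maxSide` stands in for whatever limit applies on a given device (for example, the GL maximum texture size).

```java
/** Hypothetical helper: computes the size of the combined collage video. */
final class CollageResultSize {
    final int width;
    final int height;

    CollageResultSize(int width, int height) {
        this.width = width;
        this.height = height;
    }

    /**
     * @param frameWidth  width of one camera frame in pixels
     * @param frameHeight height of one camera frame in pixels
     * @param columns     number of collage cells placed horizontally
     * @param rows        number of collage cells placed vertically
     * @param maxSide     assumed upper bound for either side of the result
     */
    static CollageResultSize compute(int frameWidth, int frameHeight,
                                     int columns, int rows, int maxSide) {
        int width = frameWidth * columns;
        int height = frameHeight * rows;
        int longest = Math.max(width, height);
        if (longest > maxSide) {
            // Scale both sides by the same factor so the aspect ratio is preserved.
            double scale = (double) maxSide / longest;
            width = (int) Math.floor(width * scale);
            height = (int) Math.floor(height * scale);
        }
        return new CollageResultSize(width, height);
    }
}
```

With a 320x480 camera frame and a 1x2 collage, `compute(320, 480, 1, 2, 4096)` gives 320x960, matching the example in the text.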

Once the size of the initial resulting video is determined, the size of each source video is derived from it. The resulting video is divided horizontally and vertically by the number of collage cells in each direction, and each cell is resized so that its width and height are multiples of 16. This is because video codecs commonly use macroblocks of 16x16 pixels, and resolutions that are multiples of 16 generally work best across devices.
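
As a minimal sketch of this step, the helper below divides the resulting size by the grid and aligns each side to a multiple of 16. The names are hypothetical, and rounding down (rather than up or to nearest) is an assumption made here so that the cells never exceed the resulting video; the post does not say which direction B612 rounds in.

```java
/** Hypothetical helper: derives the size of one collage cell. */
final class CollageCellSize {
    static final int MACROBLOCK = 16;

    /** Rounds a length down to a multiple of 16, but never below 16. */
    static int alignDown16(int length) {
        return Math.max(MACROBLOCK, (length / MACROBLOCK) * MACROBLOCK);
    }

    /** Returns {cellWidth, cellHeight} for one cell of the collage. */
    static int[] cellSize(int resultWidth, int resultHeight, int columns, int rows) {
        return new int[] {
            alignDown16(resultWidth / columns),
            alignDown16(resultHeight / rows)
        };
    }
}
```

For the 320x960 result of a 1x2 collage, each cell stays at 320x480, since both values are already multiples of 16.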

Initializing MediaCodec and determining decoder type

MediaCodec can be initialized with the following code.

```java
// Create an H.264 encoder ("video/avc" is the MIME type for H.264).
mediaCodec = MediaCodec.createEncoderByType("video/avc");
MediaFormat mediaFormat = MediaFormat.createVideoFormat("video/avc", width, height);
mediaFormat.setInteger(MediaFormat.KEY_BIT_RATE, bitRate);
mediaFormat.setInteger(MediaFormat.KEY_FRAME_RATE, frameRate);
// Take input from a Surface instead of device-specific color buffers.
mediaFormat.setInteger(MediaFormat.KEY_COLOR_FORMAT, MediaCodecInfo.CodecCapabilities.COLOR_FormatSurface);
// Interval between key frames, in seconds.
mediaFormat.setInteger(MediaFormat.KEY_I_FRAME_INTERVAL, frameInterval);
mediaCodec.configure(mediaFormat, null, null, MediaCodec.CONFIGURE_FLAG_ENCODE);
```

As the code above shows, the encoder is created with the "video/avc" MIME type so that it produces H.264 video. The desired bit rate and frame rate are also passed to the encoder so that the video is created in the desired format. The color format is set to COLOR_FormatSurface so that the encoder takes its input from a Surface. Other color formats can be specified, but when a Surface is not used, only the color formats that the device's MediaCodec supports can be chosen. With so many devices and so many color formats, the input data would then have to be prepared differently for each device. Because images in different color formats require different data layouts when they are combined into a collage, additional code would be needed for every color format that Android devices support. That would consume more development resources, which is why we chose to create MediaCodec encoders that use a Surface instead.

The speed of a MediaCodec decoder depends on the performance of the hardware it runs on. When a collage combines several videos that are individually small, software decoding can be more efficient than hardware decoding. In addition, a hardware decoder may behave unexpectedly on certain devices once a certain number of MediaCodec instances are running. That is why, on devices that needed more stability, we opted for OMX.google.h264.decoder, the software decoder provided by Google. This way, multiple videos could be decoded more quickly in a stable environment.

Multi-threaded parallel decoding

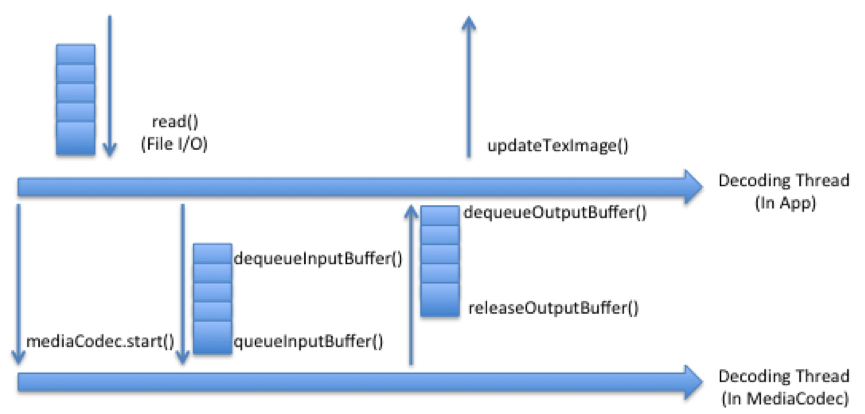

MediaCodec is designed so that encoding and decoding can be driven even from a single thread: functions such as dequeueOutputBuffer accept a timeout value. Setting a low timeout lets one thread service several decoders without being blocked for too long. Even so, this approach introduces an unavoidable slight delay, so multi-threaded video processing is more effective. That is why we allocated a thread to each video-decoding MediaCodec, so that video could be processed quickly even if one MediaCodec blocked. Figure 2 above illustrates the interaction between the decoding threads we created and the internal thread of MediaCodec.

While giving each decoder its own thread improves performance, it can backfire: if all threads run independently and the encoder cannot keep up with the decoders, decoded images pile up and overload memory. To prevent this, we placed a buffer between the decoders and the encoder and limited how many decoded images it can hold. When encoding is slower than decoding, decoding continues only until the buffer is full; once the encoding process drains the buffer, decoding resumes. This keeps memory from being wasted unnecessarily.
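
The back-pressure idea can be illustrated without any Android classes. The sketch below is a simplified model, not B612's implementation: `DecodedFrame` and `BoundedDecodePipeline` are hypothetical names, the decoder threads only create placeholder objects, and a single shared `ArrayBlockingQueue` is assumed as the buffer. The point is that `put()` blocks a decoder thread while the buffer is full, and `take()` on the encoder side frees a slot so decoding can resume.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;

/** Hypothetical stand-in for a decoded image. */
final class DecodedFrame {
    final int decoderId;
    final int index;

    DecodedFrame(int decoderId, int index) {
        this.decoderId = decoderId;
        this.index = index;
    }
}

final class BoundedDecodePipeline {
    /**
     * Starts one producer thread per decoder and consumes every frame on the
     * calling thread, which plays the role of the encoder.
     * Returns the number of frames consumed.
     */
    static int run(int decoders, int framesPerDecoder, int capacity) {
        BlockingQueue<DecodedFrame> buffer = new ArrayBlockingQueue<>(capacity);
        Thread[] producers = new Thread[decoders];
        for (int d = 0; d < decoders; d++) {
            final int decoderId = d;
            producers[d] = new Thread(() -> {
                try {
                    for (int i = 0; i < framesPerDecoder; i++) {
                        // Blocks while the buffer is full, pausing this decoder.
                        buffer.put(new DecodedFrame(decoderId, i));
                    }
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
            }, "decoder-" + d);
            producers[d].start();
        }

        int total = decoders * framesPerDecoder;
        int consumed = 0;
        try {
            while (consumed < total) {
                buffer.take(); // Encoder side: removing a frame frees one slot.
                consumed++;
            }
            for (Thread producer : producers) {
                producer.join();
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while draining frames", e);
        }
        return consumed;
    }
}
```

Because the queue never holds more than `capacity` frames, memory use stays bounded no matter how far ahead the decoders could otherwise run.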

Video synthesis using Surface

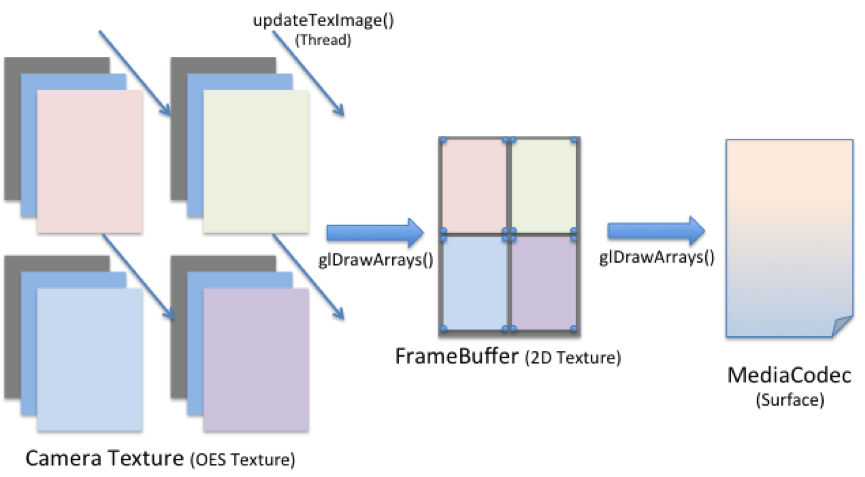

As mentioned in "Initializing MediaCodec and determining decoder type" above, the encoder is set to receive its input from a Surface. Before data is sent to the encoder, the resulting image must be prepared in the desired shape and layout. To produce this image, we allocated FrameBuffers and rendered the video into them in the shape we wanted. The output of the multi-threaded decoding described above is updated as individual textures on the GL thread through the updateTexImage function. We then set vertex coordinates for these textures so that they are arranged as intended in the FrameBuffer. Once the textures are drawn at these vertex coordinates with the glDrawArrays function, the desired result is obtained. Although the individual videos may differ slightly in length, they are combined into a single sequence of frames at the desired frames per second (FPS).
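
To make the vertex-coordinate step more concrete, here is a small sketch of how the quad for one collage cell could be computed. The class `CollageQuad`, the triangle-strip vertex order, and the choice of row 0 as the top row are all assumptions for illustration; the post does not describe B612's actual vertex layout.

```java
/** Hypothetical helper that places one video texture into a cell of the collage grid. */
final class CollageQuad {
    /**
     * Returns four (x, y) vertices in normalized device coordinates, ordered
     * bottom-left, bottom-right, top-left, top-right (triangle-strip order).
     * Row 0 is the top row and column 0 is the leftmost column.
     */
    static float[] vertices(int column, int row, int columns, int rows) {
        // Normalized device coordinates span -1..1, so the full extent is 2.
        float cellWidth = 2f / columns;
        float cellHeight = 2f / rows;
        float left = -1f + column * cellWidth;
        float right = left + cellWidth;
        float top = 1f - row * cellHeight;
        float bottom = top - cellHeight;
        return new float[] {
            left, bottom,
            right, bottom,
            left, top,
            right, top
        };
    }
}
```

On a device, an array like this would typically be copied into a FloatBuffer, bound as the position attribute, and drawn with glDrawArrays using GL_TRIANGLE_STRIP and a vertex count of 4, once per decoded texture.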

Generating MP4 files using MediaMuxer

An encoded result that consists only of raw H.264 data contains no audio and cannot be played in common players, because it lacks the additional information needed for playback. In order to play the encoded result on the result screen or in another application, it must be placed in a container format such as MP4. MediaMuxer, which is included in Android, can be used to generate MP4 files, as the following code shows.

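```java
muxer = new MediaMuxer(path, MediaMuxer.OutputFormat.MUXER_OUTPUT_MPEG_4);
// Add the track first; the muxer must be started before any samples are written.
trackIndex = muxer.addTrack(newFormat);
muxer.start();
// Repeatedly call the line below to write encoded data to the MP4 file.
muxer.writeSampleData(trackIndex, encodedData, bufferInfo);
// Finish the file and free resources once all data has been written.
muxer.stop();
muxer.release();
```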

After creating the MediaMuxer with the code above, video and audio must be added as separate tracks. However, since B612 does not need audio data during video synthesis, an audio track is added only if the user chooses audio while the file is being generated.

Conclusion

In this blog post, we looked at how we used MediaCodec, how we improved speed with multiple threads, and how a collage is synthesized. With the process above, we were able to use hardware encoders instead of software codecs and provide faster encoding to our users. Our top priority was to create an app that maintains top performance without needlessly using up memory, and thanks to a multi-threaded process that minimizes resizing, we achieved that goal.